This week in tech: 4.05.2026

Summary of AI developments - made for busy people

APPLICATIONS

I can’t even: the European Commission announced the highest safest bestest privacy standards in the world - an age verification app to protect the kids from social media. By Thursday, those standards had been defeated. With a text editor.

Did they solve 3D generation? Say hello to AnyRecon:

Overcomes the limitations of traditional models by processing arbitrary, unordered sets of images - up to 200 views.

Hybrid Architecture: combines a video diffusion backbone with a persistent global scene memory and explicit 3D geometric cues

Utilizes a geometry-driven retrieval mechanism and sparse attention blocks to maintain computational efficiency while preventing drift or blur across long trajectories.

Project page: https://yutian10.github.io/AnyRecon/

HF model page: https://huggingface.co/Yutian10/AnyRecon

Repo: https://github.com/OpenImagingLab/AnyRecon

Paper: https://arxiv.org/abs/2604.19747

Researchers trained Talkie: a 13B model fed only pre-1931 data and vintage etiquette guides to simulate historical conversational styles. This is pretty cool in its own right, but the really remarkable part is that the model can generate functional Python code by identifying and adapting structural logic and mathematical patterns found in early literature.

https://talkie-lm.com/introducing-talkie

BUSINESS

All your data is belong to us - Musk edition. SpaceX wants to acquire Cursor for USD 60B - presumably for the user base, not the tech itself (it’s a VS Code fork). xAI currently has zero presence in the segment - the big three of Claude, Gemini and Codex dominate.

https://www.theverge.com/ai-artificial-intelligence/915626/yelp-ai-assistant-chatbot-major-upgrade

Musk suing Altman for turning OpenAI into a for-profit org is quite possibly the OG of soap operas in tech. After months of propaganda war, the two sides are meeting in court.

https://www.bbc.com/news/articles/cz027nyz529o

Jeff Bezos has decided that chatbots are for suckers - he’s going after the physical market:

Co-founded Project Prometheus in Nov 2025 and serves as co-CEO

The company focuses on “Physical AI”: building models for real-world tasks in manufacturing, engineering, aerospace, and robotics

Startup reached a reported USD 38B valuation in 5 months

Bezos apparently has a broader strategy of combining AI with industrial acquisitions, aiming to create an AI-first manufacturing conglomerate.

https://techfundingnews.com/project-prometheus-bezos-funding-38b-valuation-physics-ai/

I have a confession to make: I low-key admire the chutzpah of Amodei and his minions.

They quit OpenAI because GPT-2 was too dangerous to be released to the public - and then proceeded to build way more powerful models for years on end.

In order to build said models, they steal anything that isn’t nailed to the floor - and then hunt people down for using Claude Code CLI to replicate the Claude Code CLI codebase THAT THEY THEMSELVES LEAKED.

Speaking of leaks: they build a Mythos model, super awesome cyber security powerhouse, claim it’s too dangerous to be released to the public, share it with governments - and then this awesome tool gets hacked.

https://www.bbc.com/news/articles/cy41zejp9pko

Is there a single company left that is not trying to harvest data? Pasta sauce brand Prego has launched a “Connection Keeper Bundle” - a dedicated recording device that captures and “preserves conversations, laughter, and memories shared at the table”.

If we want things to stay as they are, things will have to change: Jensen Huang says that AI will boost productivity and ultimately create more jobs - but workers might end up micromanaged by AI systems. Having dealt with multiple carbon versions of this problem, I can only sigh.

https://futurism.com/artificial-intelligence/nvidia-ceo-ai-micromanaging-boss

Stealing IP is bad, unless it’s good - listen, it’s all about who does it, ok?

The White House issued a memo warning that foreign actors (= China) are attempting large-scale “adversarial distillation” of U.S. AI models.

The plan includes sharing threat intelligence with American AI firms, improving private-sector coordination, and developing best practices to detect and defend against these activities. Long live the military-industrial-tech complex.

The government is also considering accountability measures against offenders - which is par for the course (ROTFL) given the historic US conduct wrt e.g. the topic of Israeli nukes.

Read the memo: https://whitehouse.gov/wp-content/uploads/2026/04/NSTM-4.pdf

CUTTING EDGE

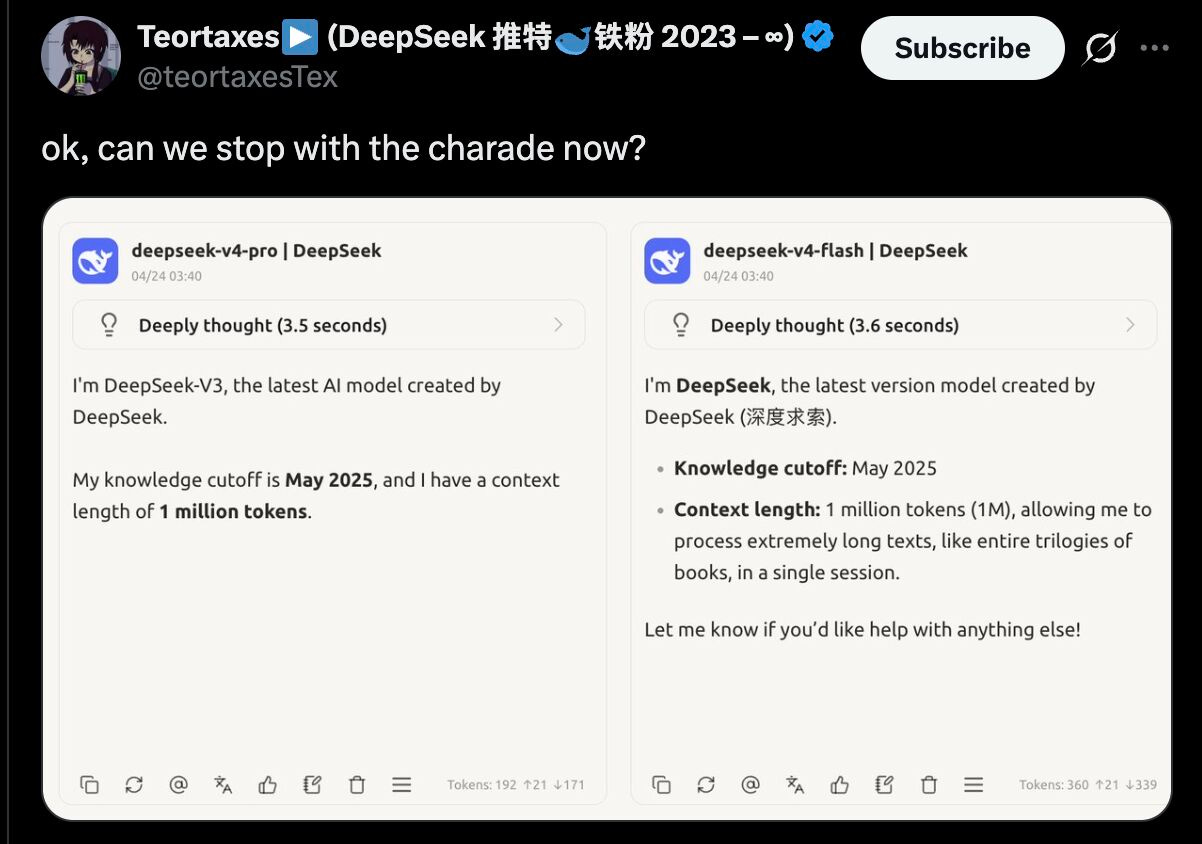

DeepSeek strikes again: V4 is here

1M token context length via hybrid attention

Two variants: V4-Pro (1.6T total / 49B active params) and V4-Flash (284B / 13B active)

SOTA open-weights performance: on par with leading models on coding, math, and benchmarks like LiveCodeBench and Codeforces

MIT license - weights, documentation, and Hugging Face integration

NO CUDA dependency - runnable on alternative hardware like Huawei Ascend.

Let me say that again: NO CUDA DEPENDENCY

HF model page: https://huggingface.co/deepseek-ai/DeepSeek-V4-Pro (tech report with all the innovations - included)
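A quick back-of-the-envelope check on what those "total / active" numbers mean per token. This is only arithmetic on the two figures quoted above; the helper name `active_fraction` is made up for illustration, and reading "active" as "parameters used per token" is my assumption about the notation.

```python
# Parameter counts in billions, as quoted above: (total, active).
variants = {
    "V4-Pro": (1600.0, 49.0),   # 1.6T total / 49B active
    "V4-Flash": (284.0, 13.0),  # 284B total / 13B active
}

def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the parameters that are active (hypothetical helper)."""
    return active_b / total_b

for name, (total_b, active_b) in variants.items():
    share = 100.0 * active_fraction(total_b, active_b)
    print(f"{name}: {share:.1f}% of parameters active")
```

In other words, both variants touch only a few percent of their weights at a time, which is where the efficiency comes from.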

Oh yeah: and it looks like it was trained on the reasoning traces from V3. Do those people have Baron Munchausen as a scientific advisor?

OpenAI shipped GPT-5.5:

Focused on autonomous, multi-step task execution

Live in ChatGPT/Codex, API access incoming

It leads key benchmarks (Terminal-Bench 2.0, FrontierMath Tier 4, long-context retrieval) - but Opus 4.7 still tops SWE-Bench Pro and MCP Atlas.

Performance gains include stronger reasoning, coding, and efficiency (same latency, fewer tokens), plus early research impact like a verified math proof.

Announcement: https://openai.com/index/introducing-gpt-5-5/

FRINGE

Ben Horwitz launched Sinceerly, an “anti-Grammarly” for Gmail: the tool deliberately makes emails messier and more human. It injects typos, shortens phrasing, and removes em-dashes / the “it’s not X, it’s Y” telltale, among others.

Users can dial the effect - and the fact the least polished version is called “CEO style” is a cherry atop this particular cake.

RESEARCH

DELEGATE-52 is a new benchmark that tests how well LLMs handle long, delegated document-editing workflows across 52 professional domains (tbh the very fact that people just go yolo on their documents is making my brain throw a bluescreen). Results? Even top models significantly degrade documents over time, corrupting 25pct of content on average, while tool use does not meaningfully improve outcomes. Current LLMs are clearly unreliable for delegation, introducing serious errors that accumulate and worsen with longer tasks / more complex contexts.

Paper: https://arxiv.org/abs/2604.15597

People have been criticizing deep learning models as a learning paradigm for years, and a recurring theme has been a comparison to how children learn: a young one only needs to see a few cats and dogs to be able to tell them apart, whereas a decent classifier built from scratch needs hundreds of examples. With the new paper from Stanford, things are getting interesting: the authors trained models on first-person child video and lo and behold, the model works.

The framework is called Zero-shot Visual World Model (ZWM) and it mimics how children infer physical reality. The core idea is to separate appearance from dynamics and use causal reasoning to generalize across tasks. The results are promising - we might finally have a path toward data-efficient, flexible AI systems that learn and reason more like human cognition.

Paper: https://arxiv.org/abs/2604.10333

Image generation and detection have advanced quickly but along separate paths: roughly speaking generative vs. discriminative. Growing use of adversarial signals has been hinting at potential synergy between the two - enter UniGenDet. The authors propose a unified framework with shared attention and fine-tuning, which allows generation and detection to co-evolve and improve each other.

Paper: https://arxiv.org/abs/2604.21904

Repo: https://github.com/Zhangyr2022/UniGenDet

One of the challenges of global models for high-dimensional multivariate time series forecasting is capturing heterogeneous predictive structure: this paper handles it via adaptive pooling. The authors propose a validation-driven framework that groups time series by out-of-sample predictive performance and not representation similarity; cluster assignments are updated iteratively to minimize predictive risk.

Paper: https://arxiv.org/abs/2604.13748
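To make the "group by predictive performance, not similarity" idea concrete, here is a toy sketch. It is NOT the paper's algorithm: the pooled least-squares models, the function names (`fit_pooled`, `predictive_clustering`) and the stopping rule are all my assumptions. Each cluster gets one pooled model, every series moves to the cluster whose model predicts its validation split best, and the loop repeats until nothing moves.

```python
import numpy as np

def fit_pooled(X_list, y_list):
    """Fit one least-squares model on the stacked data of several series."""
    X = np.vstack(X_list)
    y = np.concatenate(y_list)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

def predictive_clustering(train, valid, k, init=None, n_iter=20, seed=0):
    """train / valid: lists of (X, y) pairs, one pair per time series.

    Returns (assignments, per-cluster coefficients). Hypothetical sketch.
    """
    n = len(train)
    d = train[0][0].shape[1]
    rng = np.random.default_rng(seed)
    assign = np.asarray(init) if init is not None else rng.integers(0, k, size=n)
    coefs = np.zeros((k, d))
    for _ in range(n_iter):
        # 1) Refit one pooled model per cluster on its current members.
        for c in range(k):
            members = [i for i in range(n) if assign[i] == c]
            if members:  # an empty cluster keeps its previous coefficients
                coefs[c] = fit_pooled([train[i][0] for i in members],
                                      [train[i][1] for i in members])
        # 2) Score every series under every cluster model on held-out data.
        losses = np.array([[np.mean((yv - Xv @ coefs[c]) ** 2) for c in range(k)]
                           for Xv, yv in valid])
        # 3) Move each series to the cluster that predicts it best.
        new_assign = losses.argmin(axis=1)
        if np.array_equal(new_assign, assign):
            break  # assignments are stable
        assign = new_assign
    return assign, coefs
```

The design point: two series that look alike but respond differently to their inputs end up in different pools, because the only thing that counts is held-out error.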

Conformal Inference is awesome because it actually does what confidence intervals claim to be doing - sadly, it cannot handle distribution shift very well. Adaptive Conformal Inference (ACI) maintains coverage under distribution shift, but cannot correct bias, often widening intervals instead. Enter Bias-Corrected ACI (BC-ACI): it adds an online bias estimate to re-center intervals and fixes miscalibration at its source. Across multiple experiments, BC-ACI cuts interval error by 15pct on average under shifts while preserving performance on stable data.

Paper: https://arxiv.org/abs/2604.13253
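For the curious, a minimal sketch of what this looks like in code. The ACI part follows the standard update from the original ACI work: the working miscoverage level moves by `gamma * (alpha - err_t)` after every observation. The bias part is my own stand-in, an exponential moving average of signed residuals, and NOT the estimator from the BC-ACI paper; the names `aci_intervals`, `warmup` and `bias_rate` are illustrative.

```python
import numpy as np

def aci_intervals(y, preds, alpha=0.1, gamma=0.02, warmup=50, bias_rate=None):
    """Online ACI intervals around point forecasts `preds`.

    Scores are absolute residuals. If `bias_rate` is set, intervals are
    re-centered by a running bias estimate (hypothetical stand-in for BC-ACI).
    """
    y = np.asarray(y, dtype=float)
    preds = np.asarray(preds, dtype=float)
    alpha_t = alpha            # working miscoverage level, adapted online
    bias = 0.0                 # running estimate of the signed forecast error
    scores = []                # past absolute residuals
    lower = np.full(len(y), -np.inf)
    upper = np.full(len(y), np.inf)
    for t in range(len(y)):
        center = preds[t] + bias
        if len(scores) >= warmup:
            if alpha_t <= 0.0:
                width = np.inf     # asked for >= 100% coverage
            elif alpha_t >= 1.0:
                width = 0.0        # asked for <= 0% coverage
            else:
                width = float(np.quantile(scores, 1.0 - alpha_t))
            lower[t], upper[t] = center - width, center + width
            err = 0.0 if lower[t] <= y[t] <= upper[t] else 1.0
            # ACI update: a miss lowers alpha_t, so the next interval widens.
            alpha_t += gamma * (alpha - err)
        scores.append(abs(y[t] - center))
        if bias_rate is not None:
            # EMA of the signed residual of the raw forecast.
            bias += bias_rate * ((y[t] - preds[t]) - bias)
    return lower, upper
```

With `bias_rate=None` this is plain ACI: after a level shift it can only restore coverage by inflating the width. With the bias term switched on, the interval moves to where the data went, so it can stay narrow.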