This week in tech: 23.03.2026

Summary of AI developments - made for busy people

APPLICATIONS

“Every day, Michael Geoffrey Asia spent eight consecutive hours at his laptop in Kenya staring at porn, annotating what was happening in every frame for an AI data labeling company. When he was done with his shift, he started his second job as the human labor behind AI sex bots, sexting with real lonely people he suspected were in the United States. His boss was an algorithm that told him to flit in and out of different personas.”

This is what the H in RLHF really looks like. Read the whole thing. You will not enjoy it.

https://www.404media.co/ai-is-african-intelligence-the-workers-who-train-ai-are-fighting-back/

It was just a matter of time: we are getting killer robots… Sorry, I wanted to say: AI soldiers. Yay for progress.

https://time.com/article/2026/03/09/ai-robots-soldiers-war/

Remember Meta AI glasses, i.e. the answer to all the creeps’ prayers? A new open-source app called Nearby Glasses can detect the radio “fingerprint” of smart glasses and alert users when such devices are operating nearby. The project aims to help people identify potential covert recording in their surroundings as wearable tech becomes more widespread. Sadly it can’t pinpoint the exact wearer yet, but once it does? Here’s an absolutely obvious complementary device: your personal robot bodyguard that punches the creep in the nose.

You’re welcome.

Repo: https://github.com/yjeanrenaud/yj_nearbyglasses
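For the curious, here is a rough sketch of what matching a radio “fingerprint” could look like, assuming (and this is my assumption, not something confirmed above) that the fingerprint is the company ID carried in Bluetooth Low Energy advertising packets. The watchlist entry is a placeholder: 0xFFFF is the ID reserved for testing, not a real vendor, and the function names are made up for illustration.

```python
MANUFACTURER_DATA = 0xFF  # BLE advertising data type: manufacturer-specific data

# Placeholder watchlist (assumption): 0xFFFF is the test ID, not a real vendor.
WATCHLIST = {0xFFFF: "example smart-glasses vendor"}


def company_ids(adv: bytes):
    """Yield the company IDs found in a raw BLE advertising payload.

    The payload is a sequence of structures: [length][type][data...],
    where `length` counts the type byte plus the data bytes.
    """
    i = 0
    while i < len(adv):
        length = adv[i]
        if length == 0 or i + 1 + length > len(adv):
            break  # padding or a truncated structure: stop parsing
        ad_type = adv[i + 1]
        data = adv[i + 2 : i + 1 + length]
        if ad_type == MANUFACTURER_DATA and len(data) >= 2:
            # The company ID is the first two data bytes, little-endian.
            yield int.from_bytes(data[:2], "little")
        i += 1 + length


def match_watchlist(adv: bytes):
    """Return the watchlist labels that match this advertising payload."""
    return [WATCHLIST[c] for c in company_ids(adv) if c in WATCHLIST]


# Hand-built example payload: a flags structure, then manufacturer data.
payload = bytes([0x02, 0x01, 0x06, 0x05, 0xFF, 0xFF, 0xFF, 0x01, 0x02])
matches = match_watchlist(payload)
```

A real scanner would feed this from a live Bluetooth scan and would have to cope with false positives, since one company ID can cover many unrelated products.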

OpenAI remembered they do stuff beyond helping build Skynet and flooding the media with Altman’s ramblings about AGI:

Say hello to GPT-5.4 mini and nano: smaller models designed to deliver strong coding performance and faster execution in agent-based workflows.

Near-flagship performance at higher speed: 2× faster, improved tool use, UI reasoning, and overall coding efficiency.

Optimized for multi-model agent systems: larger models can plan tasks while mini or nano handle execution steps like code search, debugging, file edits

Mini targets complex coding and tool workflows, while nano focuses on high-throughput, low-cost lightweight subagent tasks.

https://openai.com/index/introducing-gpt-5-4-mini-and-nano/
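The planner / executor split described above can be sketched as a simple router. This is a toy illustration under my own assumptions: the tier names are placeholders rather than real model identifiers, and which step kinds count as "lightweight" is a guess.

```python
from dataclasses import dataclass

# Hypothetical tier names; real model identifiers may differ.
PLANNER = "large-model"
MINI = "mini"
NANO = "nano"

# Illustrative split only: treating these step kinds as lightweight is an assumption.
LIGHTWEIGHT_STEPS = {"code_search", "file_edit"}


@dataclass
class Step:
    kind: str         # e.g. "plan", "code_search", "debugging", "file_edit"
    description: str


def pick_model(step: Step) -> str:
    """Route a step to a model tier: plan big, execute small."""
    if step.kind == "plan":
        return PLANNER
    if step.kind in LIGHTWEIGHT_STEPS:
        return NANO   # high-throughput, low-cost subagent work
    return MINI       # more complex coding and tool workflows


steps = [
    Step("plan", "break the bug report into subtasks"),
    Step("code_search", "find callers of parse_config"),
    Step("debugging", "reproduce the failing test"),
    Step("file_edit", "apply the one-line fix"),
]
routing = [(s.kind, pick_model(s)) for s in steps]
```

In practice the routing decision would more likely come from the planner itself than from a hard-coded table, but the cost logic is the same.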

Mistral has launched Forge:

The new platform enables companies to build & run AI models entirely on their own internal data / infra

Forge trains on internal code, documents, logs, and structured data, aligns outputs with company policies and benchmarks

Supports dense or mixture-of-experts architectures plus multimodal inputs.

Key point: sensitive data never leaves their environment.

Automated deployment loop - with agents ofc, because it’s 2026

YOLO AI is the new black: Manus has introduced “My Computer” - a feature that allows its AI agent to operate directly on users’ local devices. The agent can manage files, run command-line tasks, control apps on macOS and Windows, and use GPUs for more demanding workloads. What could possibly go wrong.

https://manus.im/blog/manus-my-computer-desktop

Palantir gave a new demo of its military tools: the spin goes that military targeting once relied on soldiers manually reviewing drone footage and coordinating decisions across multiple disconnected systems using slides, whiteboards, and paperwork. With Palantir’s AI tools, identifying targets can now be done in a few clicks, as the system flags threats, suggests weapon choices, and learns from each operation to improve future actions. First results are already in:

https://blog.palantir.com/maven-smart-system-innovating-for-the-alliance-5ebc31709eea

OpenClaw is not going away anytime soon, now that Nvidia has decided to join the party:

NemoClaw is a secured infrastructure layer for OpenClaw: adds guardrails, privacy controls, and safety features while preserving autonomous agent capabilities. In other words, you can do your part to help bring about the Butlerian Jihad - with a corporate stamp of approval.

The system can still communicate via text, email, and voice.

CEO Jensen Huang calls agent platforms “the new Linux” (WTF), which suggests NVIDIA is pushing toward on-device AI deployments.

https://www.nvidia.com/en-us/ai/nemoclaw/

BUSINESS

“This time it’s different” is one of the more terrifying sentences in English, but that doesn’t prevent it from being true at times - for instance when it comes to acquisitions. Sure, there have always been huge businesses gobbling up everything in sight, and eventually they were disrupted by a hungry upstart… Creative disruption at its finest, right? Except in AD 2026 the big ones have enough money to buy everybody out and prevent competition from even emerging - because let’s be honest, everyone has a breaking point and Big Tech has pockets deep enough to reach it for most of us. Why this semi-philosophical intro? Because OpenAI just bought Astral - the people who created uv (my personal fav) and ruff. The great unwashed are not happy:

Astral built commercial value by re-implementing years of volunteer-driven Python ecosystem work (often in Rust) - which, ironically, is perfectly in line with the operating model of Altman and his minions.

The move does nothing to reduce anxiety about big tech absorbing key developer infrastructure after benefiting from open-source contributions

Everything about AI is dangerous unless it’s made by Anthropic - that’s the long and short of the business model of Amodei et al. The most recent move is the Claude Partner Network:

USD 100M initiative aimed at accelerating enterprise adoption through “consulting and AI implementation partners” - sounds a lot better than vendor lock-in.

Funding will support training, co-marketing, sales resources, and expansion of “partner-facing” engineers and technical architects.

https://www.anthropic.com/news/claude-partner-network

Yann LeCun has been complaining about LLMs being a dead end for AI before he joined Meta, while he was at Meta, and he continues to do so in his current Meta-free state. Apparently there are investors willing to listen: his startup Advanced Machine Intelligence has raised USD 1B to pursue “world models” that learn directly from real-world dynamics (and not text-based prediction):

AMI aims to build AI that understands causality and physical reality, addressing compounding errors, hallucinations, and limitations of next-token prediction in complex environments. No word on whether they will also cure cancer or impeach Agent Orange.

They will use JEPA-based architecture: compressed latent representations to model concepts, physics, and spatial relationships more efficiently than predicting raw pixels/words.

AMI plans near-term applications in complex industries like autonomous driving and robotics.

https://techcrunch.com/2026/03/09/yann-lecuns-ami-labs-raises-1-03-billion-to-build-world-models/

OpenAI is getting sued by a dictionary - well, the dictionary: Merriam-Webster and its parent company Encyclopaedia Britannica claim ChatGPT scraped nearly 100k articles without permission (plausible, to say the least), and that it violates trademark law when hallucinations falsely attribute content to Britannica.

https://techcrunch.com/2026/03/16/merriam-webster-openai-encyclopedia-brittanica-lawsuit/

If you are not paying, you are the product - exhibit 1234:

Remember Pokemon GO? The whole thing produced a massive crowdsourced real-world dataset: hundreds of millions of photos annotated with movement data

This allows Niantic to build super detailed 3D maps and visual positioning systems.

The company reportedly partnered with robotic delivery firm Coco Robotics to use the collected imagery + location insights for robot navigation.

Digital platforms can double as large-scale data-collection systems, with users (knowingly or not so much) helping to train the resulting models - and technically speaking it’s legal, because it’s mentioned on page 9999 of the EULA that nobody reads.

CUTTING EDGE

I am not sure how I feel about this one: amazed, terrified, confused? Whichever it really is, the whole thing is impressive from a technical standpoint:

AutoResearchClaw automates the full scientific workflow: from a single CLI idea prompt, it runs a 23-stage loop covering literature review, experiment execution, and paper writing end-to-end.

It searches and verifies real citations, generates code in a sandbox, and produces structured papers with charts

Users can approve steps manually or enable full auto-mode to receive a complete draft, verified references, and reproducible experiment scripts.

Repo: https://github.com/aiming-lab/AutoResearchClaw

Autoresearch repo: https://github.com/karpathy/autoresearch
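The "approve steps manually or go full auto" idea is easy to picture as a staged loop with an optional gate. A minimal sketch, not the project's actual code: the three stage names and the `approve` callback are my own stand-ins for the 23 real stages.

```python
def run_pipeline(stages, idea, approve=None):
    """Run stages in order, passing accumulated state along.

    `approve(name, result)` returning False stops the run before the
    result is accepted. With approve=None the loop runs in full auto mode.
    """
    state = {"idea": idea}
    completed = []
    for name, stage in stages:
        result = stage(state)
        if approve is not None and not approve(name, result):
            break
        state[name] = result
        completed.append(name)
    return state, completed


# Hypothetical stand-ins for the real stages.
stages = [
    ("literature_review", lambda s: f"papers related to: {s['idea']}"),
    ("experiment", lambda s: f"script testing: {s['idea']}"),
    ("paper", lambda s: f"draft citing [{s['literature_review']}]"),
]

# Full auto mode: every stage runs.
auto_state, auto_done = run_pipeline(stages, "does dropout help tiny models?")

# Manual mode: a reviewer who rejects the experiment stage halts the run there.
_, gated_done = run_pipeline(
    stages, "same idea", approve=lambda name, result: name != "experiment"
)
```

The interesting design question is where the gate sits: rejecting a stage here stops everything downstream, which is the conservative choice for something that writes papers on its own.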

FRINGE

Across the pond in the US of A a woman was wrongly arrested after an AI system falsely identified her as a suspect in a financial scam. She was flown across the country, held in jail for months, and only cleared when authorities realized she had an alibi and had never even been to the state where the crime occurred. By the time she was released (just before Christmas), she had lost her home, car, and dog, and - to add insult to injury - reportedly received no assistance for her return home.

Good thing it could never happen in Europe, because the AI Act forbids such practices. Oh wait: there is Annex III - points 1a and 6c, to be precise. Never mind.

https://www.theguardian.com/us-news/2026/mar/12/tennessee-grandmother-ai-fraud

I am worried about the dystopian horror slowly unfolding, with robot enforcers of a new world order - but that’s not to say funny stuff won’t happen along the way. In China, a Unitree G1 robot was “arrested” after startling a 70yo lady by repeatedly raising its arms behind her. Police officers escorted the clanker stalker away - hopefully to a scrap yard.

https://www.geo.tv/latest/655936-robot-arrested-in-china-after-terrifying-elderly-woman

RESEARCH

Residual connections have not changed much since ResNet (2015 - feels like a lifetime ago, doesn’t it) - researchers at Kimi decided that enough is enough:

The issue with standard residual connections is that they sum every layer’s output, which creates a “PreNorm dilution” - an unweighted mixture where useful early information gets buried and deeper layers must amplify themselves to have impact.

Kimi people propose to fix it: replace fixed summation with softmax attention over previous layers, so each layer selectively retrieves the representations it needs

The idea has legs: tests indicate improved performance on reasoning/math/code benchmarks, and suggest better depth-scaling

Paper: https://arxiv.org/abs/2603.15031

Repo: https://github.com/MoonshotAI/Attention-Residuals
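To make the contrast above concrete, here is a toy sketch of plain summation versus a softmax-weighted mix over earlier layer outputs. It is an illustration under simplifying assumptions, not the paper's implementation: the per-layer `query` vector and the dot-product scoring are my stand-ins for whatever the authors actually learn.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def standard_residual(outputs):
    """Plain residual stream: unweighted sum of every earlier output."""
    dim = len(outputs[0])
    return [sum(o[d] for o in outputs) for d in range(dim)]


def attention_residual(outputs, query):
    """Softmax-weighted mix of earlier outputs, scored against a query.

    `query` is a hypothetical per-layer vector (an assumption of this sketch).
    """
    weights = softmax([dot(query, o) for o in outputs])
    dim = len(outputs[0])
    mixed = [sum(w * o[d] for w, o in zip(weights, outputs)) for d in range(dim)]
    return mixed, weights


# Three earlier layer outputs; only the first carries the feature the query wants.
outputs = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
query = [4.0, 0.0]

summed = standard_residual(outputs)                 # early signal is outvoted
mixed, weights = attention_residual(outputs, query)  # early signal is retrieved
```

With plain summation the first layer's feature ends up as the minority component; with the weighted mix, the layer that matches the query dominates.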

Omni-Diffusion is a new any-to-any multimodal language model: it replaces standard autoregressive generation with mask-based discrete diffusion. By modeling the joint distribution of tokens across {text, speech, images}, it enables conversion and interaction between modalities within a single unified framework. The OD architecture matches / surpasses existing multimodal systems on several popular benchmarks (cue the mandatory grain of salt).

Paper: https://arxiv.org/abs/2603.06577

Project page: https://omni-diffusion.github.io/

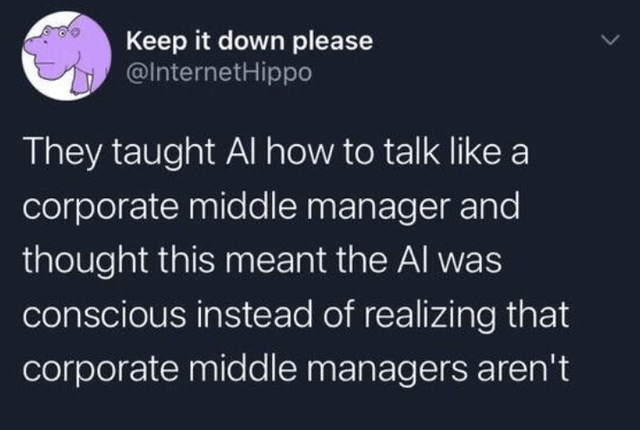
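For readers who have only seen left-to-right generation, mask-based decoding as mentioned above works roughly like this: start fully masked, predict everything, keep only the most confident guesses, repeat. The sketch below is a generic toy of that loop, not Omni-Diffusion's code; `toy_denoiser` is a hard-coded stand-in for the real network and the unmasking schedule is an assumption.

```python
MASK = "<mask>"


def toy_denoiser(tokens):
    """Stand-in for the real network (assumption for illustration).

    Returns {position: (token, confidence)} for every masked position.
    The 'prediction' is a fixed sentence; confidence drops with position.
    """
    target = ["a", "photo", "of", "a", "cat"]
    return {i: (target[i], 1.0 / (i + 1)) for i, t in enumerate(tokens) if t == MASK}


def masked_diffusion_decode(length, steps, denoiser):
    """Iteratively unmask a sequence, most confident positions first."""
    tokens = [MASK] * length
    per_step = -(-length // steps)  # ceiling division: positions to commit per step
    history = [list(tokens)]
    while MASK in tokens:
        predictions = denoiser(tokens)
        # Commit only the most confident predictions; the rest stay masked.
        best = sorted(predictions, key=lambda i: predictions[i][1], reverse=True)
        for i in best[:per_step]:
            tokens[i] = predictions[i][0]
        history.append(list(tokens))
    return tokens, history


tokens, history = masked_diffusion_decode(length=5, steps=3, denoiser=toy_denoiser)
```

Because positions are filled in confidence order rather than left to right, the same loop can in principle cover text, speech and image tokens in one sequence, which is the point of the unified framework.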

People calling what LLMs do “reasoning” has long been a pet peeve of mine - and by pet peeve, I mean a psychotic hatred. Turns out I am not the only one, except the authors of this research went about it in a more systematic fashion. To wit: most chain-of-thought steps in LLMs have little causal impact on the final answer - only a small fraction meaningfully influence predictions. Translated to plain English, most of the apparent “reasoning” (or: self-verification moments) is decorative rather than functional. The paper introduces a True Thinking Score and identifies a latent “TrueThinking direction” that can steer whether models attend to / use specific reasoning steps.

Disclaimer: results so far are limited to smaller models and may not generalize with scale.

Paper: https://arxiv.org/abs/2510.24941
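What "causal impact of a step" means can be shown with a simple ablation: drop one step, see whether the answer confidence moves. To be clear, this is a generic leave-one-out sketch and not the paper's True Thinking Score, whose exact definition I am not reproducing here; `toy_confidence` is a hard-coded stand-in for an actual model call.

```python
def causal_step_scores(steps, answer_confidence):
    """Score each step by how much answer confidence drops without it."""
    baseline = answer_confidence(steps)
    scores = []
    for i in range(len(steps)):
        ablated = steps[:i] + steps[i + 1:]  # leave step i out
        scores.append(baseline - answer_confidence(ablated))
    return scores


def toy_confidence(steps):
    """Stand-in for a model call (assumption): only the arithmetic step matters."""
    return 0.9 if "17 * 3 = 51" in steps else 0.3


steps = [
    "Let me think carefully.",
    "17 * 3 = 51",
    "Wait, let me double-check.",
    "So the answer is 51.",
]
scores = causal_step_scores(steps, toy_confidence)

# Steps whose removal barely changes the answer are "decorative" in this toy sense.
decorative = [s for s, score in zip(steps, scores) if abs(score) < 0.05]
```

In this toy run three of the four steps are decorative, including the "double-check" that checks nothing.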