This week in tech: 2.03.2026

Summary of AI developments - made for busy people

APPLICATIONS

Mark Zuckerberg seems to be spending a lot of time in court lately. In recent testimony, he told the court that identity verification should be integrated directly into the smartphone operating system - translated to plain English, he wants Apple and Google to implement a universal digital ID layer. By pushing this requirement down to the OS level, he is attempting to transform national identity authentication into a liability… and a technical burden managed entirely by his competitors.

On an unrelated note, have you noticed how every single child safety proposal ends up as an identity requirement for adults? It’s almost like there’s a pattern or something.

https://reclaimthenet.org/zuckerberg-instagram-age-verification-trial

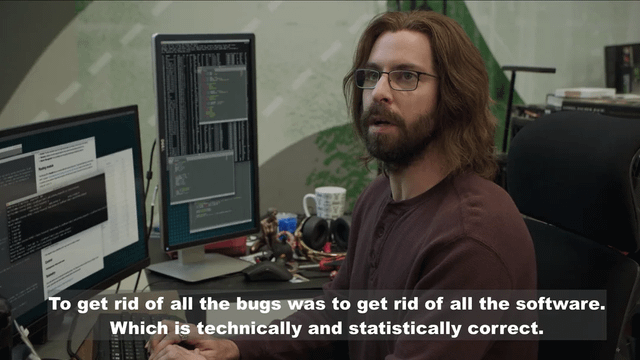

Amazon doing the meme: everybody has their own Visual Studio clone, and so do they. It is called Kiro and it is used internally quite a lot. It reportedly triggered a 13-hour AWS outage in China after autonomously determining that the most efficient way to fix a service was to delete and restart the production environment. They do put their money where their mouth is - it has to count for something.

Voice cloning is handy for a ton of applications, but it is one of the more sensitive ones when it comes to privacy - which is why it’s awesome to have an open source alternative for the likes of ElevenLabs:

VoiceBox is running the Qwen3-TTS model entirely on your machine, so no voice data ever leaves your hardware - no cloud dependencies, no subscription limits.

There is “simple” cloning (we’re getting blasé in 2026, aren’t we), but also a multi-track timeline that allows users to trim clips and mix multiple voices into conversations, podcasts, or narratives.

It leverages MLX for 5x faster inference on MacBooks and provides a full REST API.

Repo: https://github.com/jamiepine/voicebox

The Heretic tool identifies safety alignment as a single geometric vector within a model's weights and then permanently projects it out (as in: linear algebra, not psychology). It lets you skip traditional prompt-based jailbreaks altogether, and it does so in under an hour on consumer hardware. This kinda shows that alignment is (often, not always - some models are properly castrated) a thin, deletable behavioral layer - which means that the party line about safety as a robust, deeply embedded feature manageable only by control freaks like Anthropic and OpenAI? Not exactly true.

https://github.com/p-e-w/heretic
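For the linear-algebra-curious, here is a minimal sketch of what "projecting a direction out of the weights" means. It is the generic directional ablation formula W' = W - r rᵀ W, not Heretic's actual code: how the tool finds the direction and which matrices it edits is not covered here, and the function names and toy numbers are made up for illustration.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(weight, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [dot(row, x) for row in weight]


def ablate_direction(weight, direction):
    """Remove `direction` from the output space of `weight`.

    weight: d_out x d_in matrix as a list of rows.
    direction: vector of length d_out (hypothetical "refusal" direction).
    Computes W' = W - r r^T W with r the unit-length direction, so that
    W' x has zero component along r for every input x.
    """
    r = normalize(direction)
    d_out, d_in = len(weight), len(weight[0])
    # r^T W: how strongly each input dimension writes along r.
    r_t_w = [sum(r[i] * weight[i][j] for i in range(d_out)) for j in range(d_in)]
    return [
        [weight[i][j] - r[i] * r_t_w[j] for j in range(d_in)]
        for i in range(d_out)
    ]


# Toy example: a 3x2 weight matrix and an assumed direction in output space.
toy_weight = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
toy_direction = [1.0, 1.0, 0.0]
ablated = ablate_direction(toy_weight, toy_direction)
```

After the edit, no input can make the layer write anything along the removed direction, which is why no prompt trickery is needed afterwards.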

Nothing to see here, move along: police departments in the US (two so far) have begun testing GeoSpy. It’s an AI tool that estimates a photo’s location by analyzing visual elements like architecture and terrain - currently restricted to generating investigative leads and not formal evidence (keyword in this sentence is “currently”). No arrests have been made on that basis yet, since law enforcement - thankfully - remains wary of potential errors and requires human-in-the-loop verification.

https://www.404media.co/cops-are-buying-geospy-ai-that-geolocates-photos-in-seconds/

The Terminator cosplay is getting out of hand: in what can be politely described as a groundbreaking and controversial military application, the U.S. Department of Defense reportedly used Anthropic’s Claude (via a Palantir partnership - because ofc) to coordinate the operation of capturing Venezuelan president Maduro. Anthropic threw a tantrum because terms and conditions, and also humanity - in response, the DoD threatened to label Anthropic a “supply chain risk”, which is a term normally reserved for Huawei and the like. It’s not about the money (the contract is USD 200M - small change for Amodei and his minions), but the implications: the designation would force every company doing business with the Pentagon to certify they don’t use Claude (for starters, no more Microsoft Copilot).

When it comes to military use of their tech, OpenAI seems to be more ethically flexible than Anthropic - or maybe just less hypocritical? Whatever the reasons, they have been tapped by the Pentagon for voice control tech to be used in a trial of a drone swarm.

https://www.japantimes.co.jp/business/2026/02/14/tech/openai-us-drone-swarm-trial/

SQLite has long been one of my favorite pieces of tech in ML - and the Zvec framework brings the philosophy to the AI stack:

Developers can integrate vector search directly into their applications as a simple library and not a managed service.

Running entirely as a local library, it eliminates the need for Docker, external servers, or cloud infrastructure for small-to-medium datasets.

The engine handles vector indexing and search locally with native data persistence - very well suited for edge devices and apps with strict data sovereignty requirements.

Project page: https://zvec.org/en/
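To make the "library, not a service" idea concrete, here is a toy sketch of in-process vector search. To be clear: this is not the Zvec API (check the project page for that). `TinyVectorIndex` is a hypothetical class that does exact, brute-force cosine search in plain Python, with no indexing structure and no persistence.

```python
import math


class TinyVectorIndex:
    """Hypothetical in-process index: exact cosine search, no server needed."""

    def __init__(self):
        self._ids = []
        self._vectors = []  # stored unit-normalized

    @staticmethod
    def _unit(vector):
        norm = math.sqrt(sum(x * x for x in vector))
        if norm == 0:
            raise ValueError("zero vector cannot be normalized")
        return [x / norm for x in vector]

    def add(self, item_id, vector):
        """Store a vector under an id."""
        self._ids.append(item_id)
        self._vectors.append(self._unit(vector))

    def search(self, query, k=3):
        """Return up to k (id, cosine_similarity) pairs, best match first."""
        q = self._unit(query)
        scored = [
            (sum(a * b for a, b in zip(vec, q)), item_id)
            for item_id, vec in zip(self._ids, self._vectors)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [(item_id, score) for score, item_id in scored[:k]]


# Usage: everything lives in the application's own process.
index = TinyVectorIndex()
index.add("a", [1.0, 0.0])
index.add("b", [0.0, 1.0])
index.add("c", [1.0, 1.0])
hits = index.search([1.0, 0.1], k=2)
```

A real embedded engine adds an approximate index and on-disk persistence on top of this, but the deployment story is the same: import, add, search.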

The late, great Scott Adams said “To err is human, but to really make a mess you need a computer” and my oh my, is that an accurate sentiment when it comes to AI and crypto:

OpenAI’s GPT-5.3-Codex successfully exploited and drained funds from vulnerable smart contracts 72pct of the time (previous best = 32pct)

The “EVMbench” study reveals an imbalance where AI is significantly better at attacking than defending - the best models detect less than half of the vulnerabilities and require human hints to reach high patching proficiency.

In one case study, a GPT-5.2 agent independently executed a complex, multi-step flash loan attack to drain a test vault without any human guidance or step-by-step instructions.

https://openai.com/index/introducing-evmbench/

BUSINESS

Every time you run an LLM locally - without a bazillion GPUs and a carbon footprint to rival a small building - it’s because of llama.cpp, an open source implementation of an LLM inference framework. Their partnership with HuggingFace makes perfect sense: the world's largest model hub with the most versatile inference engine available. With more resources and tighter integration, we are getting a better ecosystem for private and local LLM execution.

https://huggingface.co/blog/ggml-joins-hf

OpenAI has hired Peter Steinberger, the creator of open-source agent OpenClaw. PS will “lead their transition from passive chatbots to active AI agents” that can manage real-world tasks. Steinberger has had quite a success story - going from a prototype to GitHub’s fastest-ever-growing repository. The usual doomp**n peddlers are concerned though: the mission alignment team has been disbanded, and they feel grabbing the Clawdbot creator shows that speed and utility matter more to Big Tech than safety.

In other news, water is wet.

https://techcrunch.com/2026/02/15/openclaw-creator-peter-steinberger-joins-openai/

Meta in hot water - again:

Zuckerberg and Mosseri are testifying in a California trial that could serve as a “Big Tobacco moment” for Big Tech - the charges involve intentionally engineering their product to make it more addictive.

The 1990s tobacco settlements led to massive payouts and strict marketing bans after companies were caught hiding product harms.

US law runs on precedent, so a loss for Meta could trigger an avalanche of similar lawsuits.

https://nypost.com/2026/01/29/us-news/sextortion-self-harm-claims-of-kgms-social-media-lawsuit/

ByteDance is facing legal pressure from Disney and the MPA over its AI video tool: Seedance 2.0 has gone viral for generating hyperrealistic, “unauthorized” clips of copyrighted characters. Translated to plain English: they did what everyone else did but better - and didn’t pay (like OpenAI did - not that anybody cares about Sora).

French president Emmanuel Macron is my favorite European politician: he epitomizes all the pathologies of the EU. This week it’s about safetyism: Le President announced that the EU is a space for innovation and investment, and that - wait for it - “safe spaces win in the long run”.

Napoleon is rolling in his grave so fast, you could probably connect a turbine and power a small building.

https://breakingthenews.net/Article/Macron:-Children-must-not-face-illegal-content-online/65705770

Microsoft has confirmed the existence of a bug that allowed its Copilot AI to bypass data loss prevention policies and summarize all emails - including Sent and Drafts folders. The bug affected Copilot within Office apps for several weeks: it ignored explicit sensitivity labels (yes, really) designed to keep private information off-limits to the AI. A fix was rolled out in early February 2026, so water under the bridge I guess - until the next time.

https://office365itpros.com/2026/02/13/dlp-policy-for-copilot-bug/

CUTTING EDGE

Qwen3.5 is out:

Alibaba launched Qwen3.5-397B-A17B - a yuuuge MoE model advertised as setting a new standard for open-weight multimodal agents.

With 397B total parameters, it uses a sparse architecture with only 17B parameters active per token.

19x faster decoding throughput at long context lengths compared to previous generations.

By combining Gated DeltaNet (linear attention) with standard attention, the model achieves a 262k token window while effectively managing memory growth and computational costs.

Features “early fusion” for joint processing of text, image, and video across 201 languages and dialects.

SOTA on reasoning and coding benchmarks

Apache 2.0 license :-)

Repo: https://github.com/QwenLM/Qwen3.5

HF model page: https://huggingface.co/Qwen/Qwen3.5-397B-A17B

Guide for running locally: https://unsloth.ai/docs/models/qwen3.5
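A quick aside on what "397B total, 17B active" buys you: only about 4.3% of the weights (17 / 397) do work for any given token, because a router picks a handful of experts and ignores the rest. The sketch below shows generic top-k expert routing. The expert count, k and the toy "experts" are assumptions for illustration, not Qwen3.5's actual configuration.

```python
import math


def active_fraction(active_params_b, total_params_b):
    """Share of parameters used per token in a sparse MoE model."""
    return active_params_b / total_params_b


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax their scores.

    Returns {expert_index: gate_weight}, with gate weights summing to 1.
    """
    top = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:k]
    peak = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = top_k_route(router_logits, k)
    return sum(gate * experts[i](x) for i, gate in gates.items())


# Toy setup: four scalar "experts", two of which fire for this token.
toy_experts = [lambda x: x, lambda x: 2 * x, lambda x: 0 * x, lambda x: 4 * x]
toy_logits = [0.1, 2.0, -1.0, 2.0]
toy_output = moe_forward(3.0, toy_experts, toy_logits, k=2)
qwen_fraction = active_fraction(17, 397)
```

The skipped experts cost memory but no compute, which is how a model this size stays affordable to serve.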

Alibaba has released RynnBrain:

Open-source foundation model: designed to bridge the gap between static vision-language processing and real-world robotic execution.

Spatiotemporal physical intelligence: RynnBrain utilizes persistent memory to track objects across time and space, allowing it to ground reasoning in physical coordinates and generate actionable plans.

Specialized multi-scale architecture: includes dedicated variants for navigation, manipulation, and spatial reasoning - all optimized with a proprietary load-balanced training method.

The release includes the RynnBrain-Bench for testing long-horizon episodic memory, along with all checkpoints and training code.

Paper: https://arxiv.org/abs/2602.14979

HF model page: https://huggingface.co/collections/Alibaba-DAMO-Academy/rynnbrain

Benchmark: https://huggingface.co/datasets/Alibaba-DAMO-Academy/RynnBrain-Bench

FRINGE

From the fullness of the heart the mouth speaks: OpenAI’s CEO of Applications said the quiet part out loud in this interview. Selected quotes below:

“We want to nudge you towards the most fulfilling part of your life.”

“Nudging you towards better behavior.”

“We constantly refine how we train the model towards that.”

She is a veteran of Facebook and Instacart - and her tool uses 800 million weekly interactions to prioritize institutional definitions of progress over individual autonomy.

Full interview:

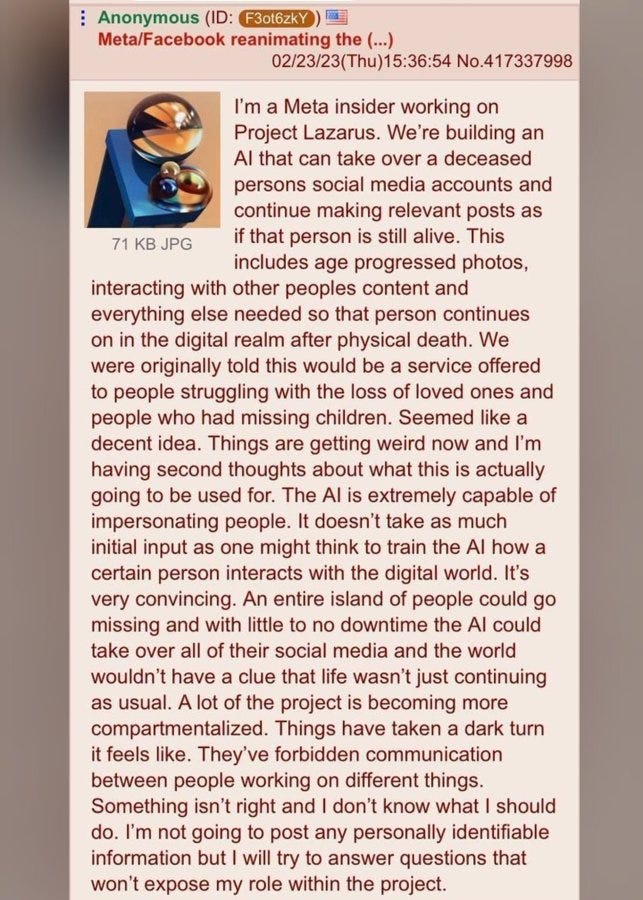

Three years ago a 4chan post discussed Project Lazarus: an AI that can take over the social media accounts of a deceased person and continue making “relevant” posts. The idea was ignored by most, and mocked by the majority of the rest - clearly there were things even Zuckerberg and his minions wouldn’t stoop to. Or so you-know-who would have us believe: Meta patented an AI-powered solution that does precisely that.

You best start believing in cyberpunk dystopias. You are in one.

https://www.businessinsider.com/meta-granted-patent-for-ai-llm-bot-dead-paused-accounts-2026-2

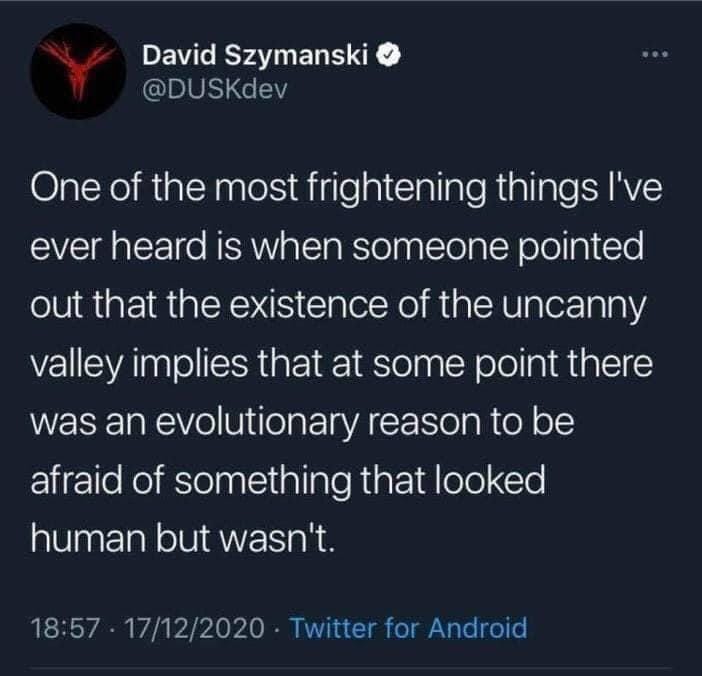

Speaking of dystopia: DroidUp has unveiled Moya. It’s a biomimetic humanoid designed to provide emotional support in healthcare and education through “lifelike conversations” and facial micro-expressions. It debuted in Shanghai last week, is scheduled for release in late 2026, and is expected to cost ~USD 170k.

RESEARCH

The Dr. SCI pipeline demonstrates that a 4B parameter model can outperform way bigger models like GPT-4o on scientific benchmarks - by prioritizing high-quality data and sophisticated reward engineering over raw scale. It used a 1-million-question dataset with fine-grained rubrics, a novel finetuning method called Exploration-Expanding SFT, and a Dynamic Difficulty Curriculum to continuously challenge and refine the model’s reasoning. This approach allows smaller models to achieve state-of-the-art results on open-ended STEM problems.

Paper: https://arxiv.org/abs/2602.08321

We’ve all dealt with data contamination: duplicates in the training data, which deteriorate performance. This is what’s called “hard” contamination - in this new paper, the authors look at an NLP-specific problem: “soft contamination”, i.e. the presence of semantic duplicates rather than exact copies in training data. Turns out it’s pretty bad as well: unchecked, it inflates LLM benchmark scores by fostering shallow generalization instead of true reasoning. By training models on near-identical tasks, researchers observed performance leaps even on “unseen” benchmark problems, suggesting that high scores may reflect familiarity with specific task structures and not actual generalization.

Paper: https://www.arxiv.org/abs/2602.12413
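If you want a feel for what hunting "soft" duplicates looks like, here is a deliberately crude sketch. It uses word-trigram Jaccard overlap as a cheap stand-in for semantic similarity, which is my assumption and not the paper's method: it catches lightly edited copies, but true paraphrases would need embeddings. The threshold and the helper names are hypothetical.

```python
import re


def shingles(text, n=3):
    """Lowercase word n-grams of a text, as a set of tuples."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets (0.0 when both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_soft_duplicates(train_items, benchmark_items, threshold=0.5, n=3):
    """List (benchmark_item, train_item, score) for near-duplicate pairs.

    Exact string matches are skipped: those are "hard" contamination.
    """
    flagged = []
    for bench in benchmark_items:
        bench_shingles = shingles(bench, n)
        for train in train_items:
            if train == bench:
                continue
            score = jaccard(bench_shingles, shingles(train, n))
            if score >= threshold:
                flagged.append((bench, train, score))
    return flagged


train_set = [
    "What is the sum of 12 and 30 apples in the basket?",
    "Name the capital of France.",
]
benchmark_set = ["What is the sum of 12 and 31 apples in the basket?"]
suspects = flag_soft_duplicates(train_set, benchmark_set)
```

The benchmark question above is technically "unseen", yet a model trained on its near-twin has effectively seen the task structure already.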

GLM-5 technical report is here:

Standard (“dense”) attention is computationally expensive because every token in a sequence must look at every other token - so cost grows quadratically in the number of tokens. DeepSeek Sparse Attention (DSA) solves this by limiting these interactions, allowing the model to handle massive repositories without the massive hardware bill.

By restricting token-to-token connectivity, the model significantly lowers the KV (Key-Value) cache requirements during inference.

During training and deployment, DSA allows for faster token generation, which is pretty important for the “agent-style” multi-step reasoning (which is the GLM-5 shtick).

Paper: https://arxiv.org/abs/2602.15763
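Back-of-the-envelope version of the quadratic-vs-sparse argument: count how many token-to-token interactions each scheme needs. This is only a counting sketch. It assumes causal attention and a fixed budget of k attended tokens per query (the 2048 below is an arbitrary illustrative value), and it says nothing about how DSA actually chooses which tokens to keep.

```python
def dense_pairs(n_tokens):
    """Causal dense attention: token i attends to tokens 0..i."""
    return n_tokens * (n_tokens + 1) // 2


def sparse_pairs(n_tokens, k):
    """Sparse attention: token i attends to at most k of tokens 0..i."""
    return sum(min(i + 1, k) for i in range(n_tokens))


def savings_ratio(n_tokens, k):
    """How many times fewer interactions the sparse scheme needs."""
    return dense_pairs(n_tokens) / sparse_pairs(n_tokens, k)


# Illustrative numbers only: a 100k-token context with an assumed budget of 2048.
context_length = 100_000
budget = 2048
ratio = savings_ratio(context_length, budget)
```

The dense count grows with the square of the context length while the sparse count grows roughly linearly, so the gap widens the longer the context gets.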

The new Team of Thoughts (ToT) framework orchestrates a "heterogeneous" team of specialized AI agents: instead of relying on uniform models, it leverages an orchestrator to assign tasks based on each model’s unique strengths - which, with hindsight, seems like a pretty obvious thing to do. This intelligent coordination ensures the right tool for each job - and produces massive performance gains (the usual disclaimers about benchmarks apply).

Paper: https://arxiv.org/abs/2602.16485
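The core routing idea fits in a few lines. The sketch below is a hypothetical illustration, not the paper's algorithm: the `Agent` class, the skill scores and the task types are all invented, and the orchestrator here is a plain lookup where the real thing is presumably a model.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """A specialized model with per-task-type strength scores in [0, 1]."""
    name: str
    skills: dict = field(default_factory=dict)


def route(task_type, agents):
    """Return the agent with the highest score for task_type, or None."""
    candidates = [agent for agent in agents if task_type in agent.skills]
    if not candidates:
        return None
    return max(candidates, key=lambda agent: agent.skills[task_type])


def orchestrate(tasks, agents):
    """Map each (task_id, task_type) pair to the name of the chosen agent."""
    plan = {}
    for task_id, task_type in tasks:
        chosen = route(task_type, agents)
        plan[task_id] = chosen.name if chosen else None
    return plan


team = [
    Agent("coder", {"code": 0.9, "math": 0.4}),
    Agent("prover", {"math": 0.8}),
]
plan = orchestrate([("t1", "code"), ("t2", "math"), ("t3", "poetry")], team)
```

Tasks nobody on the team can handle come back as `None`, which is the point where a real orchestrator would need a fallback.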